Stephen Few has published an article today criticising the state of research in the information visualisation field. I’m a great admirer of Stephen. His work was largely the reason I discovered this subject, he’s a terrific guy to meet in person and I have huge respect for the clarity and conviction of his principles and his willingness to speak out. However, I can’t agree with the essence of some of his arguments today about research.

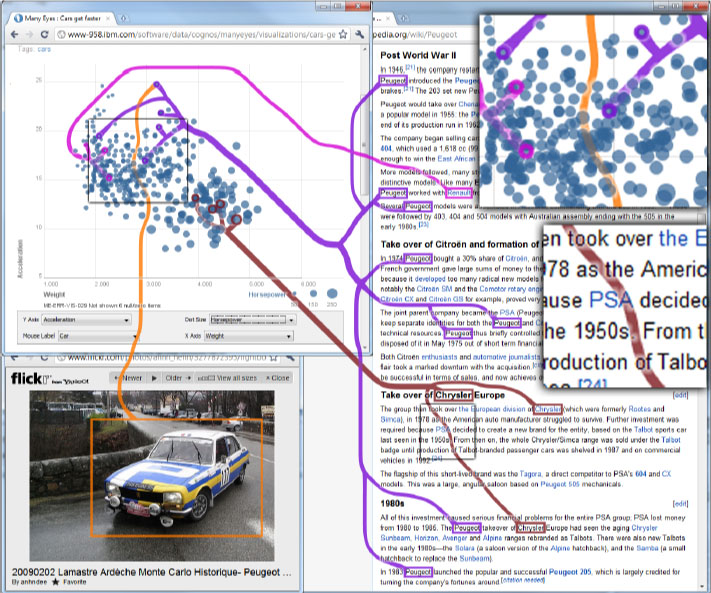

Stephen repeats his long-standing complaint about the quality of research and cites as an example the “InfoVis Best Paper” award given at this year’s conference to a paper on “Context-Preserving Visual Links”:

…these visual links are almost entirely useless for practical purposes.

Before I worked in a university environment (albeit not as an academic or researcher), I held the uninformed view that research was extremely disorganised, inefficient and of little consequence: ‘all this valuable charity or government money going on people’s elaborate hypotheses or minutiae interests’. So in the past I would probably have agreed with this sentiment:

I’ve often complained that much information visualization research is poorly designed, produces visualizations that don’t work in the real world, or is squandered on things that don’t matter. To some degree this sad state of affairs is encouraged by the wayward values of many in the community.

However, what I didn’t appreciate was the importance of the research ‘system’: the bigger picture of this complex beast, beyond individual study projects viewed in isolation, and the fundamental interconnectedness of those projects. Regardless of whether a project produces a paradigm shift in knowledge, it still plays an important role in informing and inspiring the future researchers who come across it.

Ideas, methods and conclusions explored in even the most inconsequential study can spark ideas in somebody else, setting off a chain of knowledge we can’t plan for. A study might have determined nothing of intellectual importance or practical value, but the fact that it arrived at that outcome still matters to others, in a “there’s nothing to see down there, move along” kind of way.

Stephen takes a swipe at the information visualisation research community for focusing on the creation of technology to the detriment of “the purpose of information visualization, which is to help people think more effectively about data, resulting in better understanding, better decisions, and ultimately a better world”:

Technical achievement should be rewarded, but not technical achievement alone. More important criteria for judging the merits of research are the degree to which it actually works and the degree to which it does something that actually matters.

I can’t comment on whether the example he has focused on will achieve this, but if we refer to the definition of information visualization offered by Shneiderman, Card and Mackinlay, “the use of computer-supported, interactive, visual representations of abstract data to amplify cognition”, there is a clear statement about the prominence of technology in this field. Indeed, virtually all visualisation design is carried out using technology, often through a suite of different packages for each project. The importance and value of pushing the boundaries of technological solutions and concepts will be clear to many practitioners, especially developers who seek to build new programming solutions for the projects they work on. Each research development or concept pursued will surely enrich this work, directly or indirectly, regardless of whether it works or matters.

Finally, Stephen expresses discontent with academic supervisors for allowing researchers to direct their efforts in misapplied ways, leading to results that can’t be meaningfully applied to information visualisation:

My intention here is not to devalue the talents of these researchers, and certainly not to discourage them, but to bemoan the fact their obvious talents were misdirected. What a shame. Why did no one recognize the dysfunctionality of the end result and warn them before all of this effort was…I won’t say wasted, because they certainly learned a great deal in the process, but rather “misapplied,” leading to a result that can’t be meaningfully applied to information visualization.

…Information visualization research must be approached from this more holistic perspective. Those who direct students’ efforts should help them develop this perspective. Those who award prizes for work in the field should use them to motivate research that works and matters. Anything less is failure.

It strikes me that much of the research process is incredibly non-linear, unpredictable and serendipitous, despite the best-laid designs (how many of us would still be around if it wasn’t?). Isn’t part of the wonder and complexity of research the fact that it can’t be boxed into a simplified, binary state of success or failure?

What do you think?